Postdoctoral Research Fellow

Pholpat (Big) Durongbhan

I develop reproducible quantitative methods for high-resolution biomedical imaging and time-series data, with a focus on CT, synchrotron, and microscopy imaging. My work spans validated feature extraction, interpretable modelling of structure–function relationships, and workflow automation, turning complex datasets into clinically meaningful metrics.

What are my research interests?

Research Highlights

I am an interdisciplinary researcher working at the intersection of engineering, biomedicine, and computer science. I develop quantitative image-analysis tools for high-precision, reproducible biomedical assessment. Across my training in Thailand, the UK, and Australia, I have focused on building methods that improve measurement reliability and translational relevance.

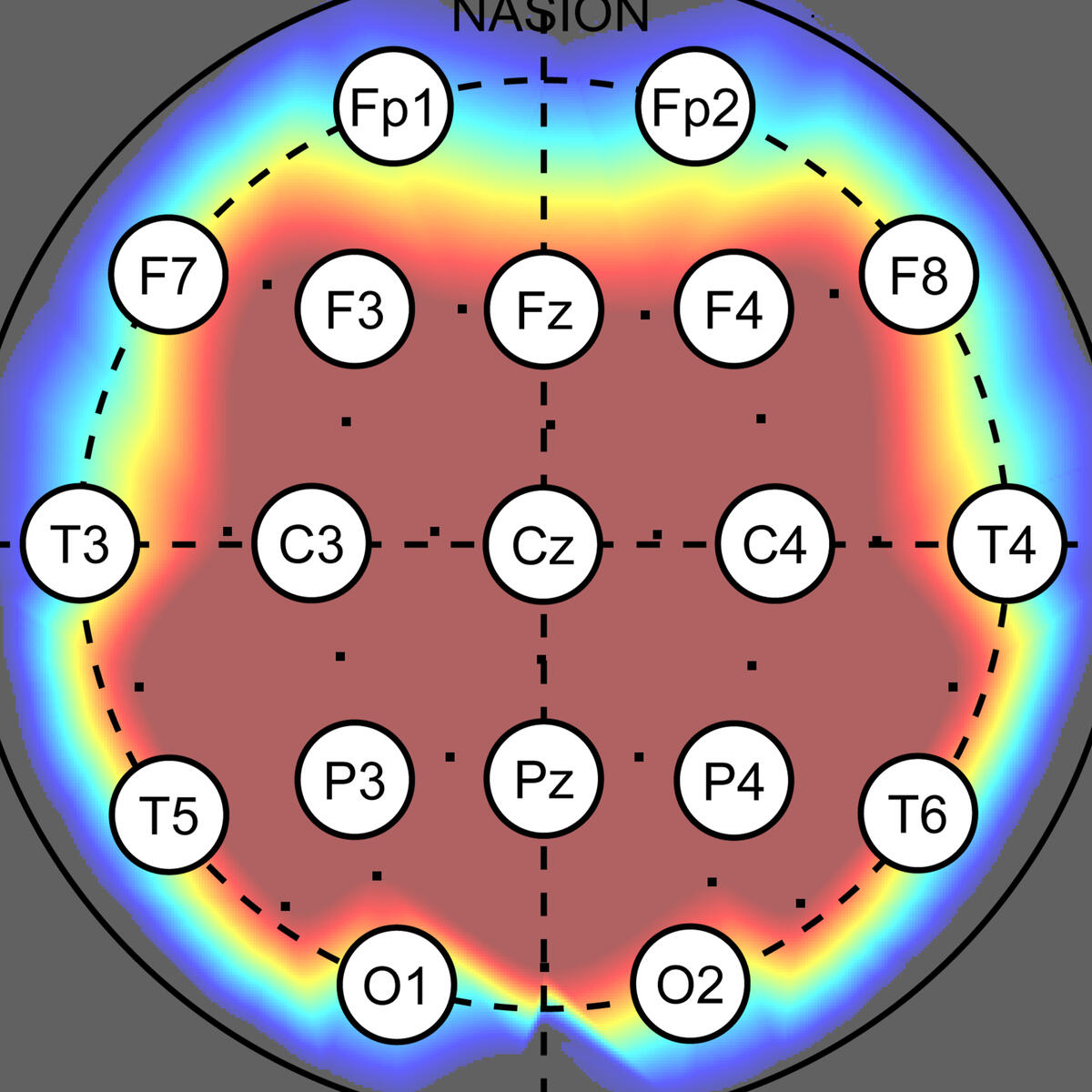

Assessment of Brain Connectivity

○ Developed machine-learning and non-linear signal analysis tools for dementia diagnosis and brain connectivity profiling (Cranfield University, Royal Hallamshire Hospital).
○ Built reproducible feature-extraction and classification workflows to support robust interpretation of EEG connectivity markers.
○ Produced two high-impact publications, with ongoing work on how sampling frequency influences machine-learning classification accuracy.

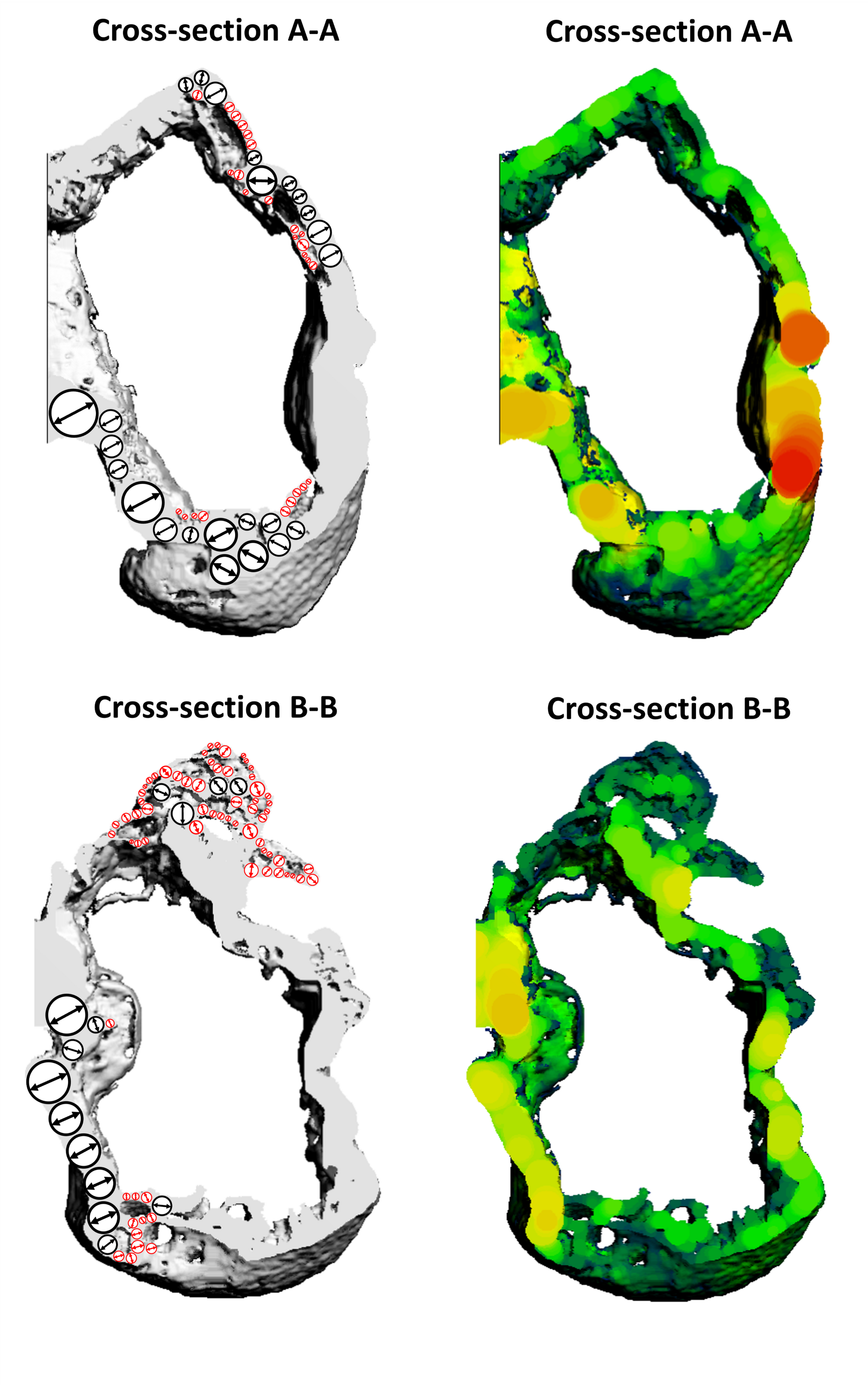

Morphological Analysis of Joint Tissues

○ Developed analysis workflows to quantify structural changes in joint tissues using contrast-enhanced and synchrotron-radiation microCT (University of Melbourne).
○ Designed pipelines for reproducible morphometrics across different species and experimental protocols, with careful validation of measurement stability.
○ Demonstrated robustness across contrast agents and imaging resolutions, enabling adaptable deployment in diverse osteoarthritis and joint-tissue studies.

Quantification of Cell and Matrix Morphology

○ Built image-processing pipelines for quantitative analysis of cell and matrix in cartilage tissue constructs from 3D microscopy data (Erasmus MC, Murdoch Children’s Research Institute, University of Melbourne).
○ Addressed common microscopy artifacts, including depth-wise intensity attenuation and ambiguous boundaries, to improve segmentation reliability.
○ Enabled precise volumetric quantification of matrix deposition for comparative analysis across conditions and experimental batches.

What funding have I secured?

Selected Research Projects & Fellowships

In addition to those listed, I have received multiple competitive postdoctoral fellowship offers and collaborative facility-access awards.

2026 JSPS Postdoctoral Fellowship - Standard Program

Lead Applicant: Establishing image-driven estimation of embryonic mechanical properties for reproductive health

2024 University of Melbourne Early Career Researcher Grant

Lead Applicant: High-throughput, high-resolution image analysis platform for quantitative arthritis research

2023 ANZBMS Bone Health Foundation Grant

Co-applicant: Remodelling of the osteochondral interface using contrast-enhanced micro-computed tomography

2023 Australian Synchrotron Beamtime Grant

Co-applicant: Nanoscale Measurement of Porous Channels in the Bone-cartilage Interface of a Pre-clinical Mouse Model of Osteoarthritis using Synchrotron Radiation Phase-Contrast Imaging

What have I published?

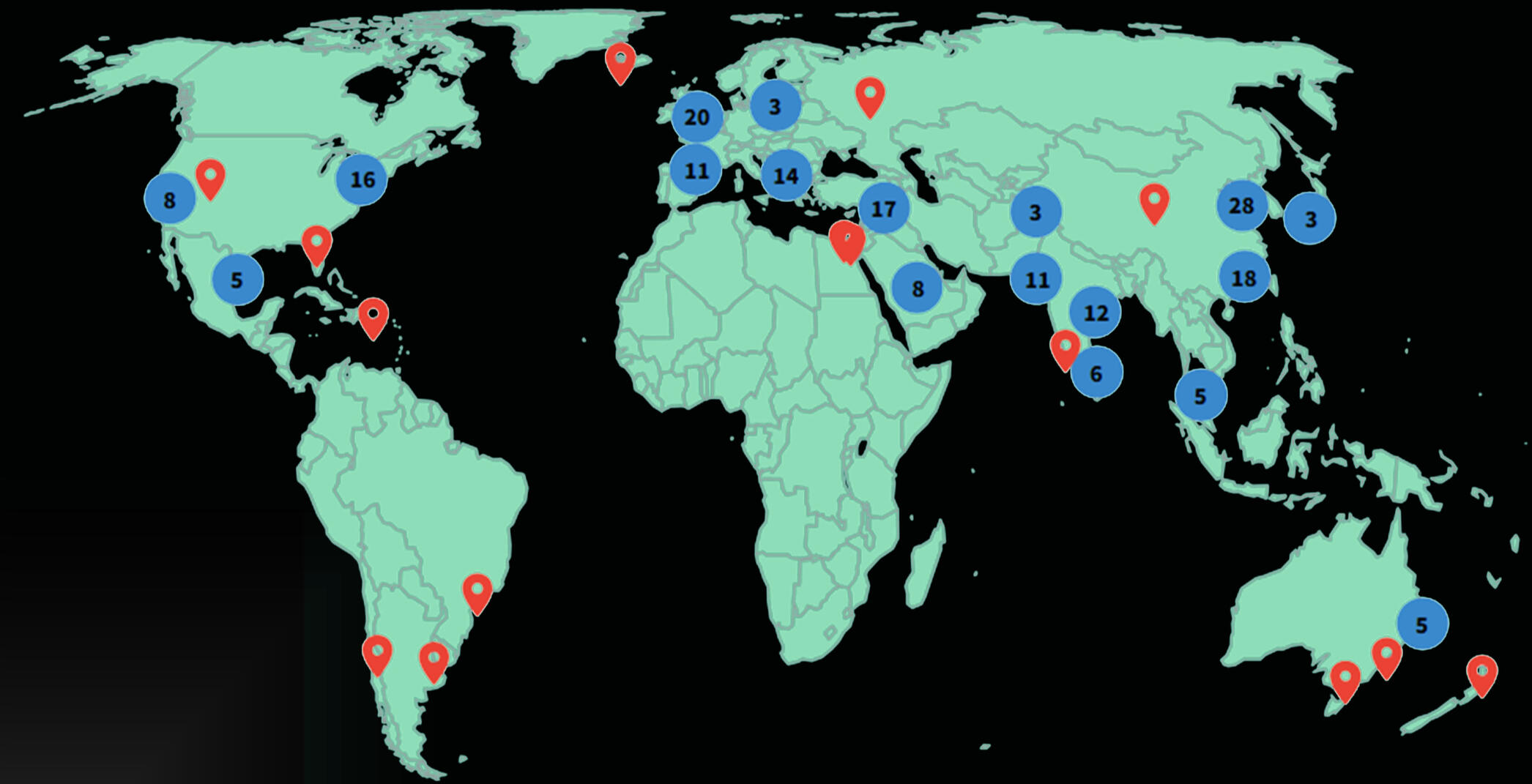

Selected Scholarly Works & Reach

Global citation footprint of my research outputs.

Regional-level aggregation of citing institutions, based on Web of Science citation metadata (January 2026).

[Q1] P. Durongbhan, et al., “Empirical Modelling Workflow for Resolution Invariant Assessment of Osteophytes,” IEEE Transactions on Biomedical Engineering, December 2024. DOI: 10.1109/TBME.2024.3431634

[Software paper] M. T. Kuczynski, et al., “ORMIR_XCT: A Python package for high resolution peripheral quantitative computed tomography image processing,” The Journal of Open Source Software, vol. 9, no. 97, 6084, May 2024. DOI: 10.21105/joss.06084

[Book chapter; quantitative analysis of Thai prosody] P. Tiratanti and P. Durongbhan, “Reported thought embedded in reported speech in Thai news reports,” in The Grammar of Thinking: From Reported Speech to Reported Thought in the Languages of the World, pp. 239–260, De Gruyter Mouton, 2023. DOI: 10.1515/9783111065830-009

[Q1] P. Durongbhan, et al., “SPHARM-PDM Based Image Preprocessing Pipeline for Quantitative Morphometric Analysis (QMA) for in situ Joint Assessment in Rabbit and Rat Models,” Scientific Reports, vol. 12, no. 1, January 2022. DOI: 10.1038/s41598-021-04542-8

[Q1; top 15% most cited on Scopus] Y. Zhao, et al., “Imaging of Nonlinear and Dynamic Functional Brain Connectivity Based on EEG Recordings with the Application on Diagnosis of Alzheimer's Disease,” IEEE Transactions on Medical Imaging, vol. 39, no. 5, pp. 1571–1581, May 2020. DOI: 10.1109/TMI.2019.2953584

[Q1; top 5% most cited on Scopus] P. Durongbhan, et al., “A Dementia Classification Framework Using Frequency and Time-Frequency Features Based on EEG Signals,” IEEE Transactions on Neural Systems and Rehabilitation Engineering, vol. 27, no. 5, pp. 826–835, May 2019. DOI: 10.1109/TNSRE.2019.2909100

What has my journey been like?

My Journey Thus Far

I received my initial training in Electrical Engineering at Chulalongkorn University (Thailand), with a focus on signal processing and systems analysis. This foundation shaped how I approach complex data problems, with an emphasis on mathematical rigour, reproducibility, and interpretable modelling.

I later completed a Master’s degree at Cranfield University (UK), where I developed high-resolution EEG and machine-learning methods for dementia diagnosis in close collaboration with clinical neurologists. This experience grounded my work in translational research at the intersection of engineering, data science, and medicine.

During my PhD and postdoctoral work at the University of Melbourne (Australia), my research shifted toward high-resolution biomedical imaging. I developed reproducible analysis pipelines for bone–cartilage and joint tissues using microCT and synchrotron imaging. Across these stages, my work has been united by a single aim: building robust quantitative methods that translate complex imaging and time-series data into meaningful biological and clinical insight.

Selected awards: ANZBMS Christine & T. Jack Martin Research Travel Grant • FEIT Excellence in Teaching & Learning Award (Innovation in Teaching Programs)

© Pholpat Durongbhan. All rights reserved.